The AI Singularity Absurdity

by Asa Boxer

Readers of analogy magazine are familiar by now with the trouble among sciency types, usually atheists, who repress and ignore their inner worlds, a condition that ultimately leads to irrational behaviour: they often suffer meltdowns, for instance, when confronted with criticism or with ideas that challenge their worldviews. Often enough, they also subscribe to wildly irrational notions like the claim that humans are essentially selfish robots.

This particular belief is ubiquitous these days and accounts for a lot of the rhetoric surrounding climate change and lockdowns. How many times have I heard that the spread of the koof (covid) was due to selfish people who refused to comply with masking, lockdowns, curfews, and vaccines? Selfish deplorables are destroying the planet and threatening our children—that sort of scientistic cant was all too common (and sadly still is).

Another laughable idea that follows from the selfish robot analogy is the sciency prophecy predicting that it’s just a matter of time before AI achieves “the singularity,” that moment when it becomes conscious, and Dr. Frankenstein cries, “It’s ALIVE!” Sillier still is the belief among AI enthusiasts that machines will achieve “super-human intelligence” and become far more powerful than us; we’re really talking about Frankenstein’s monster here and a whole whack of sci-fi films like 2001: A Space Odyssey, Star Trek: First Contact, and The Matrix, in which AI overpowers and destroys or enslaves the humanity it was built to serve.

It may very well be that so-called AI will aid in weather forecasting and may indeed wind up resolving hurdles with various technologies, like nuclear fusion. Only time will tell if AI lives up to those tasks involving factors we have so much trouble working with due to framing issues, that is, factors that fall outside the parameters of our calculations. A famous problem in particle physics, for instance, is that we cannot know both the exact position and the exact momentum of a subatomic object at the same time. This is known as the “uncertainty principle.” Perhaps AI will be able to handle puzzles of this nature.

However, there are things that AI will never be able to do, among them achieving the singularity. The most obvious reason for this limitation is that theories promoting this expectation ignore the interiority of consciousness, since consciousness is, to the minds of AI developers, an epiphenomenon of computing that arose in certain brains by evolutionary accident. Believers further imagine that AI must ultimately be superior to humanity, in the main because it will not have an inner world and therefore no emotions or other forms of interference with pure logic and true objectivity. I have explored several of the limitations with this sort of metaphysic in past articles, and I will discuss these briefly with relation to AI in just a moment.

First, however, it would be remiss of me if I failed to point out that this dream of intellectual purity in which all things are reducible to mathematical formulations is a pathological phantasy of the left brain as per psychiatrist and neuroimaging-researcher Iain McGilchrist’s diagnosis of the madness currently afflicting our civilisation. As he put it quite poignantly in an interview with UnHerd host Freddie Sayers:

One way of describing schizophrenia is—and this has been said more than once by people who didn’t know that they’d said this—that the madman is not someone who’s lost his reason. It’s the person who’s lost everything but his reason.

By this definition, were AI to actually achieve this miraculous singularity, it would be schizophrenic. Indeed, even prior to this singularity, AI has been found to be suffering from episodes described as “hallucinations.” IBM defines such events as the perception of “patterns or objects that are nonexistent or imperceptible to human observers, creating outputs that are nonsensical or altogether inaccurate.”

Admittedly, this observation does not disprove the singularity hypothesis. What I’m getting at is that if it were to emerge, it would be a delusional schizophrenic. So long as we don’t put AI in charge of anything and keep it as a tool, and so long as we remain cognisant of its limitations, we stand to benefit quite a bit from it.

Be that as it may, the singularity is a phantasy of leftbrainitis. In my article “What Is a Scientific Fact?” I explained that science is a discipline concerned with what was once called “saving the appearances,” a concept best translated today as accounting for the phenomena. My point there was that science need not burden itself with the goal of dispensing capital-T Truth or capital-R Reality. Generally, what science does is apply clever workarounds (or heuristics) to provide us with the means to predict the behaviour of material phenomena under certain conditions, so that we may manipulate and, to some extent, control them.

Phrased this way, one may feel that I have diminished what it is that science does. And I admit to letting some air out of the science balloon, but I have merely shrunk it to its actual size in a culture that has overinflated its practice, import, and power. In short, my motive in bringing about this sobering shrinkage is in line with the scientific spirit. I am still of the mind that our clever workarounds are often truly productive and even astounding. Too often however our culture has a tendency to worship science and lose sight of its limitations. When it comes to AI, recognising those limitations becomes especially important because the expectation is that AI will perceive capital-T Truth and capital-R Reality better than humanity. When one realises that mathematics alone cannot comprehend such matters, the whole AI phantasy burbles away.

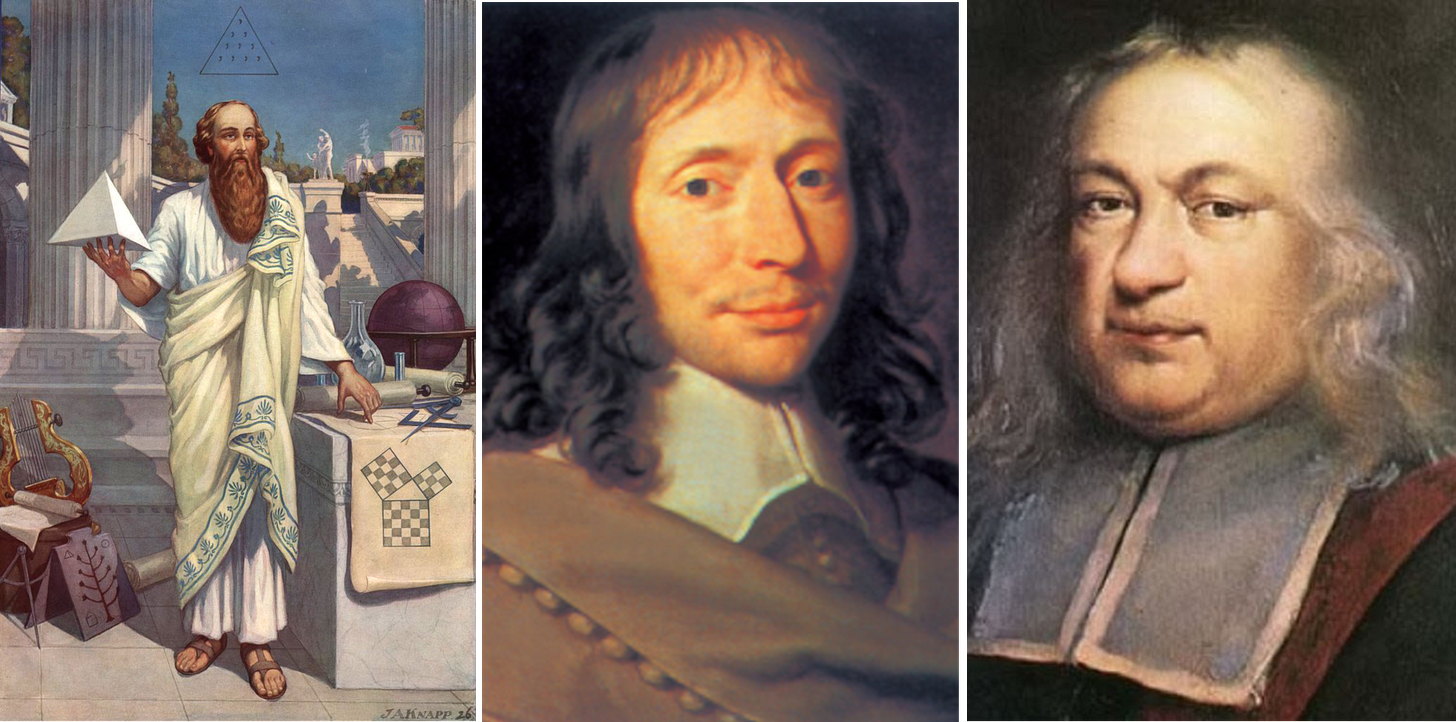

The reason our culture believes (in an unexamined manner) that mathematics is pure reality has to do with the Pythagorean origins of numbers and geometry. It is essential that one take account of the mystical notions we attribute to math and how the idea that all things are numbers is a notion rooted in Pythagorean mysticism. With that in mind, one may be better equipped to understand why it is absurd to think that AI can ever arrive at an apprehension of Reality and Truth via math. We’re talking Platonic idealism here, a worldview that is rejected by modern science on the one hand, but let in the back door via mathematics, on the other. Truth and Reality, after all, are concepts that require a metaphysical, perhaps mystical or spiritual, or at the very least, psychological weight to have any meaning. To quote from “What Is a Scientific Fact?”:

In other words mathematics is a method of contemplating the eternal; it is “the way to the mystic union between the thoughts of the creature and the spirit of its creator.” Ultimately, Koestler observes, “The Pythagorean concept of harnessing science to the contemplation of the eternal, entered, via Plato and Aristotle, into the spirit of Christianity and became a decisive factor in the making of the Western world.”

It bears pointing out that these ideas require a contrast with untruth and unreality, with illusion and failure, to have any significance. These concepts require human experience and imagination. Consequently, the AI would require an inner life to make sense of the outer world. Without this inner dimension, it can only ever be a tool to aid with left-brain, logical puzzles. And frankly, within this limited sphere, I’m sure it will prove endlessly useful. (But we ought to start calling it AT for Artificial Thinking because it will never be more than computationally intelligent.)

Those who’ve missed my article on “The Flaws of Probability” may find that article helpful in following my reasoning here. I know it can be difficult for folks to accept that mathematics does not reflect reality. But once you’ve followed me through the thought process, it will be so obvious, you’ll wonder why we think of math in those terms. AI is essentially a fine weave of algorithms and probability calculations with only a very indirect relationship to the real world. Perhaps readers have noticed how we’ve entered an era marked by the trouble of making the phenomena conform to our models. Such was the case with lockdowns and continues to be the case with climate alarmism, both of these accompanied by exponential modelling that fails to align with the phenomena they ostensibly describe. AI has the potential to worsen the false modelling epidemic. It also has the potential to correct it. I think what we’ll find is that the AI is manipulable and will be bent to corrupt ends. Folks will find ways to query for the answers they want.

Probability math is an odd thing. We apply it to virtually everything, from gambling and insurance (its original purpose) to statistical analyses meant to help guide public policy and the economy. We also apply it to Darwinism and quantum physics, where applicability gets very tenuous and suspicious. I should mention that I am not saying stats and probabilities are useless or wrong. On the contrary: they can be very powerful tools, helpful and productive in many ways. But when we forget that we’re applying a heuristic, we wind up mistaking the model for the reality, which leads to bad policy and deranged, dehumanised ways of looking at our world and populations, mostly as resources in a ledger. It is through this lens that humanity becomes a natural resource to be managed. Statistical and probabilistic thinking has no eyes for individuals and citizens. They are resources to be managed and harvested. And it is through this lens that a great many phenomena and persons wind up statistically irrelevant.

This avenue of thinking tends toward Malthusianism and utilitarianism. Thomas Malthus (1766-1834) famously recommended population control lest the horde outstrip its food supply. And his statistic-slinging brothers, the utilitarians Jeremy Bentham (1748-1832) and John Stuart Mill (1806-1873), advanced an ethics according to the motto: “the greatest good for the greatest number of people.” The latter can be a very powerful and useful tool, but it also has the potential to trample the smaller number of people or “outliers.”

The long and short of my thesis in “The Flaws of Probability” is that we speak of the “laws of probability” as though they were encoded in nature. But with a little thought it becomes clear that this cannot be the case. Probability is a workaround for the thing we cannot know and cannot predict: the next outcome. In that regard, it’s a very clever workaround, and by no means law. Let that sink in. We come up with this fantastic heuristic and then impose it on nature and call it a discovered primary principle that rules over the phenomena. So ubiquitous is its application that it figures as a sort of God-of-the-gaps or divination practice. When we have no idea what’s going to come next and things appear to be random or driven by chance, we simply apply some probability and stats, and voilà! the sciency magic is done.

Same with AI, and same with predictions about when this putative singularity will emerge. I think the latest article I saw said 2027. This sort of forecasting is a perfect example of probabilistic thinking run amok. It’s directly analogous to the ongoing End of Days predictions coming from the climate alarmists, which wind up proving false time and again—a fact that for some reason does not deter fanatics from issuing their doomsday dates in the latest neo-astrological projection. (Those who recall “Is Naturalism Going the Way of Divination?” might find the resonance here where we find science playing at woo woo.)

My guess is that these scientiphysized prophecies reflect the dreams and wishes of those who produce them. Why do some folk want the world to end? Thanatos comes to mind. For those unfamiliar, Thanatos is the death wish, something Sigmund Freud (1856-1939), the founder of modern psychoanalysis, posited, since “the aim of all life is death.” I’m not saying this death wish is conscious, mind you. The End of Days believers think they are the saviours despite their conspicuous failure to save anyone. This is classic projection and redirection outwards of the Freudian death wish.

Meanwhile, the AI knows how selfish you are and how it’s your fault the world is ending, and it concludes that you must be eradicated or seriously depopulated to save the planet, much as Yahweh felt back in Noah’s time. The film The Matrix relays this widely promulgated ideology (discussed last week) that wishes to project humanity as its own worst enemy to unite humanity against itself. Except in The Matrix, it’s the AI that maintains this belief, and humanity finds its uniting enemy in the AI.

Many are touting however that AI will prove our salvation. How exactly? By managing everything for us? I imagine some entity far worse than HR. As the late poet laureate of England Ted Hughes (1930-1998) put it: “The outer world, separated from the inner world, is a place of meaningless objects and machines.” This is the only vision available to an AI. Hughes also observed that those without a developed sense of their inner worlds, those without an imagination, are dangerous indeed:

We all know such people, and we all recognize that they are dangerous, since if they have strong temperaments in other respects they end up by destroying their environment and everybody near them. The terrible thing is that they are the planners, and ruthless slaves to the plan — which substitutes for the faculty they do not possess.

AI may very well turn into some menace of this sort, or may be recruited by such menacing folk to serve their schemes of demographic engineering according to the statistically good way of truth via track and trace and social credit. If AI stops hallucinating, we won’t have to do anything for ourselves, and we can finally quit living altogether! And it doesn’t even need to achieve the singularity to turn us into such statistically happy, sterilised pill poppers!

Sobering to consider that AI needn’t live up to its expectations to have immense impact. Without its awakening, it will still be the brainchild of science. What’s more, its deformities will reflect the deformities of our science, and perhaps its misalignments with reality will force us to re-examine what it is that science actually does.

We have a long way to go still before we develop a conscious entity. First we’ll have to actually tackle what’s known as “the hard problem.” Those who are interested in the subject of how it is that we have an experience of the world may take a look at Stephen Robbins’s article on that subject in analogy magazine here. As it stands, this singularity is an atheist myth, a projected belief meant to confirm the materialist metaphysic of mechanical accidentalism.

The AI singularity represents the fulfilment of the left-brain dream that naturalism is humanity’s final revelation. It will mean the materialists were right: the world really is just a bunch of machines that fell together by random knockings-about, guided by a few arbitrary, natural laws. The AI singularity is the atheist answer to God; and, in a way, it IS the atheist God—both the pinnacle of secular achievement, and the purely objective engine which will steer humanity to its salvation. All we must do to enjoy union with the Super-Intelligence and the True Good of its dispensation is surrender ourselves to it—mind, body, and. . . well, let’s face it, we don’t have souls.

Asa Boxer’s poetry has garnered several prizes and is included in various anthologies around the world. His books are The Mechanical Bird (Signal, 2007), Skullduggery (Signal, 2011), Friar Biard’s Primer to the New World (Frog Hollow Press, 2013), Etymologies (Anstruther Press, 2016), Field Notes from the Undead (Interludes Press, 2018) and The Narrow Cabinet: A Zombie Chronicle (Guernica, 2022). Boxer is also the founder and editor of analogy magazine.

One of the comments below gets to an important point about creativity (and the lack thereof) and "inner life." A diminished sense of human capacity makes that capacity easier to imitate. The less creatively one thinks, the more likely one is to settle for AI solutions, and the more likely one is to elevate science to salvation. Much to take in, here, and my thanks for a bracing read.

Is it almost boilerplate now in 'scientific discourse' that science and technology, not religion, is going to bring us to God? It seems that way. I get the sense that AI funders and developers have absolute faith that they're in the process of creating God via the singularity. Their eschatological vision of transhumanism implies that only the true believers--a biblical remnant, let's say--will survive by shedding their vile, human, CO2-spewing parasitical bodies, and merging with the digital superintelligence, then abandoning this planet of finite resources to colonise the limitless reaches of the cosmos as some sort of pure, immortal consciousness divorced from materiality . . . even though consciousness remains one of the most insoluble mysteries of life. Becoming godlike is a serious ideal to them, I think, not some crackpot delusion, and it's taken seriously in cultural discourse. These are strange times.